Automatic dust correction in digital photographs: How DxO PureRAW 6 uses deep learning to find and remove dust spots

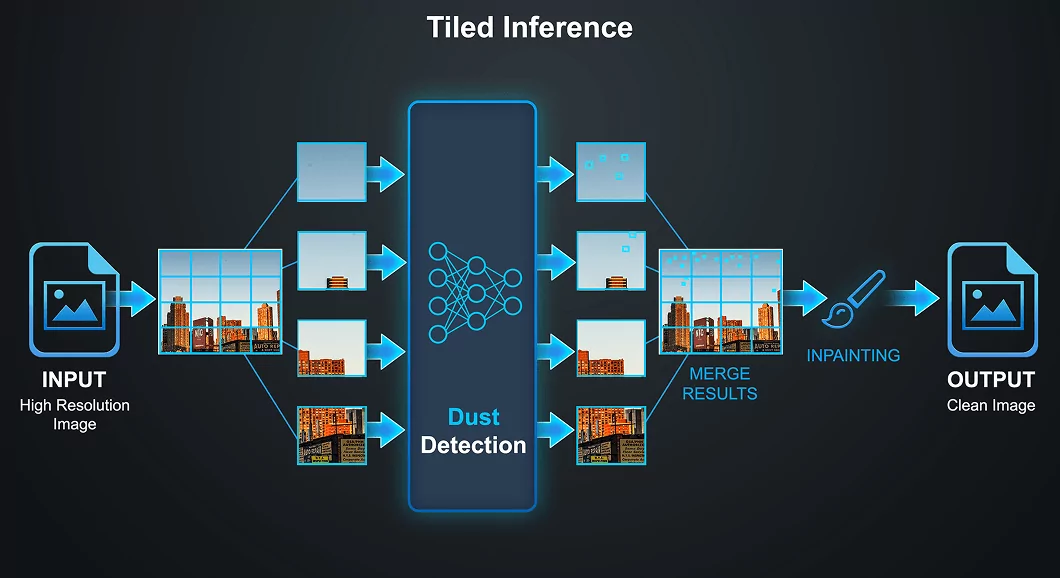

DxO PureRAW 6 introduces automatic dust detection and removal: a single click identifies dust spots across the entire image and erases them, automating a tedious manual process. The feature combines a state-of-the-art object detection neural network with DxO's proven inpainting engine.

Key benefits for users

- Fully automatic workflow. Dust detection and removal are enabled with a single checkbox. Batch-process an entire shoot, and every image comes out clean.

- Adjustable sensitivity. A slider lets the user trade off catching every possible spot (high sensitivity) against avoiding false positives (low sensitivity).

(That said, we still recommend cleaning your gear from time to time. 😉)

The problem

Interchangeable-lens cameras tend to accumulate dust on their sensor or lenses. These particles cast small, soft shadows in your images — most visible in smooth, uniform areas such as skies and studio backdrops.

Photographers have long dealt with this in post-processing, using repair, heal, and retouch brushes. On heavily affected images or when processing a high volume of images, this quickly becomes tedious.

DxO PureRAW 6 automates this process. A detection algorithm scans the image for dust spots, and an inpainting algorithm erases each one automatically.
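The two-stage flow can be illustrated with a minimal sketch. The fill used here is a basic diffusion inpainting, in which each masked pixel is repeatedly replaced by the average of its four neighbours. It is only a stand-in and not DxO's inpainting engine; the function names and the `(x0, y0, x1, y1)` box format are assumptions made for this example.

```python
import numpy as np

def boxes_to_mask(shape, boxes, margin=1):
    """Boolean mask that is True inside each (x0, y0, x1, y1) box, grown by a margin."""
    h, w = shape
    mask = np.zeros(shape, dtype=bool)
    for x0, y0, x1, y1 in boxes:
        mask[max(0, y0 - margin):min(h, y1 + margin),
             max(0, x0 - margin):min(w, x1 + margin)] = True
    return mask

def inpaint_diffusion(image, mask, iterations=300):
    """Fill masked pixels of a 2-D image by iterated 4-neighbour averaging."""
    out = image.astype(np.float64).copy()
    if not mask.any():
        return out
    out[mask] = out[~mask].mean()  # neutral starting guess inside the holes
    for _ in range(iterations):
        p = np.pad(out, 1, mode="edge")
        neighbours = (p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]) / 4.0
        out[mask] = neighbours[mask]  # pixels outside the mask are never modified
    return out

def remove_dust(image, boxes):
    """Stage 2 of the pipeline: erase every detected box (stage 1 supplies `boxes`)."""
    return inpaint_diffusion(image, boxes_to_mask(image.shape, boxes))
```

On smooth backgrounds such as skies, even this simple fill reconstructs the gradient well; textured backgrounds are where a more capable inpainting engine is needed.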

Why dust detection is challenging

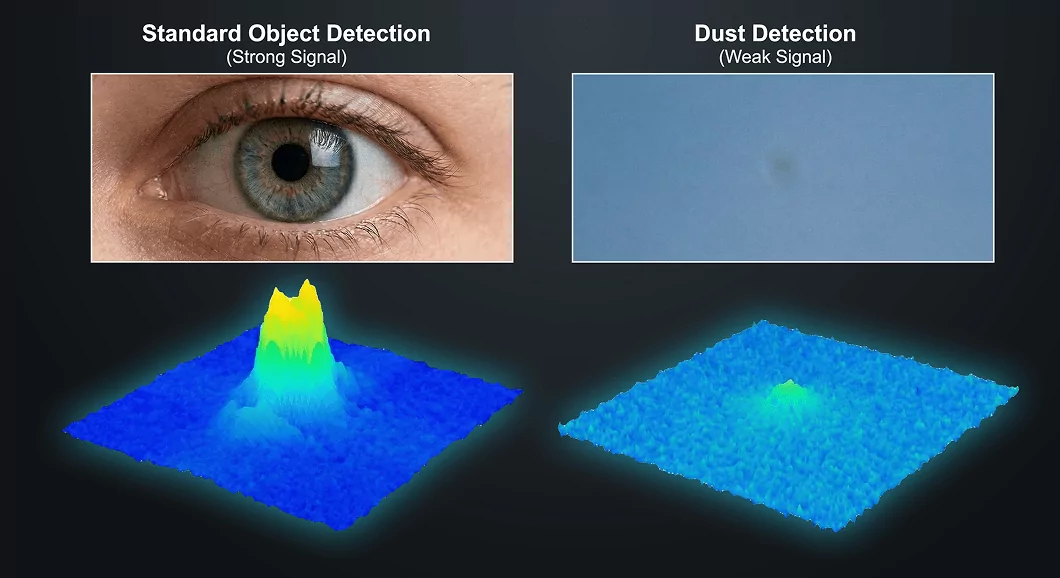

At first glance, sensor dust seems easy to describe: small, dark, roughly circular blobs. But the apparent simplicity is deceptive. Several properties make robust detection surprisingly difficult.

Extreme subtlety. Most dust spots attenuate only a small fraction of the incoming light — often just 5 to 20 percent. They are faint stains, not opaque blots, and their visibility depends heavily on the underlying image content.

Tiny spatial extent. At full resolution, a typical dust spot spans only a handful of pixels, small enough that general-purpose object detectors, which are optimized for much larger objects such as people or cars, struggle to register it.

No rich structure. Unlike the objects that mainstream detectors excel at — a face with eyes, nose, and mouth; a car with wheels and windows — a dust spot offers almost nothing for a neural network to latch onto. It is, in essence, a faint dark smudge.

Enormous variability. The appearance of a dust spot depends on the size and shape of the particle, its distance from the sensor surface, the lens aperture, and the color and brightness of the underlying scene. Some spots are sharp-edged circles; others are soft, diffuse halos. Some appear nearly black against a bright sky; others are barely distinguishable from noise. Because appearance depends on aperture and scene, the same physical particle can look quite different from one photograph to the next. The diversity is far greater than a casual glance would suggest.
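To make the subtlety point concrete, the sketch below applies the same 10 percent attenuation in linear light to backgrounds of different brightness and reports the resulting change in 8-bit sRGB levels. It uses the standard sRGB transfer function; the background values and helper names are chosen purely for illustration.

```python
def srgb_encode(linear):
    """Standard sRGB transfer function for a linear-light value in [0, 1]."""
    if linear <= 0.0031308:
        return 12.92 * linear
    return 1.055 * linear ** (1 / 2.4) - 0.055

def visible_drop(background, attenuation):
    """Drop in 8-bit sRGB levels when a spot removes `attenuation` of the light."""
    clean = srgb_encode(background)
    dusty = srgb_encode(background * (1.0 - attenuation))
    return 255.0 * (clean - dusty)

# Illustrative linear-light backgrounds: deep shadow, mid-tone, bright sky.
for background in (0.05, 0.2, 0.8):
    drop = visible_drop(background, 0.10)
    print(f"linear {background:.2f}: {drop:.1f} levels darker")
```

The same particle produces a larger absolute change on a bright background than in a shadow, yet in both cases the change stays small relative to the 255-level range, so noise and texture can easily hide it.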

The detection model: RF-DETR

The heart of the feature is RF-DETR, a transformer-based object detection architecture. We evaluated several detection architectures, including multiple generations of CNN-based models. RF-DETR was selected for a combination of reasons:

State-of-the-art accuracy. RF-DETR achieves top scores on standard object detection benchmarks, outperforming many well-known alternatives.

Multiple model sizes. Nano, Small, Medium, Large, and XL variants allow us to choose the best trade-off between accuracy and computational cost. We selected the Medium variant (33 million parameters).

Resolution-agnostic architecture. RF-DETR contains no fully connected layers that would fix the input resolution. This flexibility is important for our tiled inference strategy: the image is divided into overlapping 512×512 pixel patches, and the detection model runs independently on each patch. Results are then merged across the full image.
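The tiling and merging steps can be sketched as follows. Only the overlapping 512×512 patches and the merge come from the description above; the 64-pixel overlap, the score and IoU thresholds, the greedy non-maximum suppression used for merging, and the `detect_tile` callback (standing in for the actual model) are assumptions for this example.

```python
import numpy as np

def tile_origins(length, tile=512, overlap=64):
    """Start offsets of overlapping tiles that together cover [0, length)."""
    if length <= tile:
        return [0]
    starts = list(range(0, length - tile, tile - overlap))
    starts.append(length - tile)  # last tile sits flush with the image border
    return starts

def iou(a, b):
    """Intersection over union of two (x0, y0, x1, y1, ...) boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0

def detect_tiled(image, detect_tile, tile=512, overlap=64,
                 score_threshold=0.5, iou_threshold=0.5):
    """Run `detect_tile` on each patch, then merge duplicates from overlaps."""
    h, w = image.shape[:2]
    candidates = []
    for y in tile_origins(h, tile, overlap):
        for x in tile_origins(w, tile, overlap):
            for x0, y0, x1, y1, score in detect_tile(image[y:y + tile, x:x + tile]):
                if score >= score_threshold:
                    # Shift patch coordinates back into full-image coordinates.
                    candidates.append((x0 + x, y0 + y, x1 + x, y1 + y, score))
    candidates.sort(key=lambda d: d[4], reverse=True)
    kept = []
    for det in candidates:  # greedy NMS: keep the best box of each cluster
        if all(iou(det, k) < iou_threshold for k in kept):
            kept.append(det)
    return kept

def fake_detector(patch):
    """Hypothetical stand-in for the model: boxes the non-zero pixels of a patch."""
    ys, xs = np.nonzero(patch)
    if ys.size == 0:
        return []
    return [(xs.min(), ys.min(), xs.max() + 1, ys.max() + 1, 0.9)]

image = np.zeros((1000, 1000))
image[500:506, 470:476] = 1.0  # one spot lying in the overlap of several tiles
result = detect_tiled(image, fake_detector)
```

In this sketch the spot is seen by several overlapping tiles, and the merge step collapses those duplicates into one detection. A user-facing sensitivity control could plausibly be mapped to `score_threshold`, although that mapping is an assumption and not something the description above states.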

In standard benchmarks, RF-DETR detects dozens of object categories — people, vehicles, animals, furniture. For our use case, we retrained the model to recognize a single class: dust spot. The challenge lies not in classification but in detection — finding tiny, low-contrast features in a vast image.

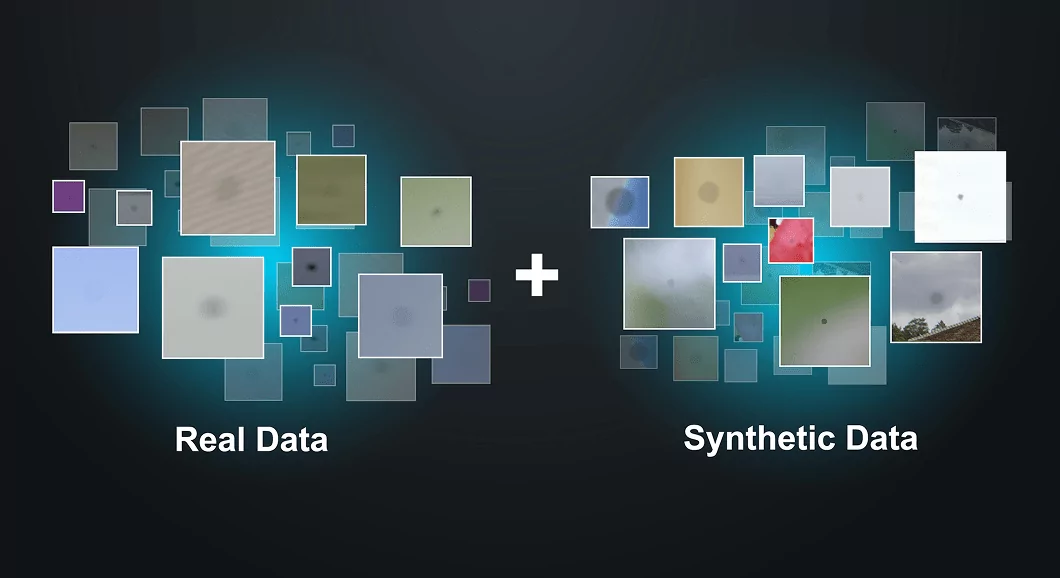

Training data

Training a reliable dust detector requires exposing the network to a very large number of examples covering every conceivable combination of dust shape, opacity, blur, and background.

We started by collecting thousands of real photographs with genuine dust spots, all carefully labelled by hand. This real-world dataset already covers a great diversity of dust shapes, sizes, opacity, blurriness, and backgrounds, but we wanted to go further.

With its expertise in image and signal processing, our research team developed a dust synthesizer: a compact algorithm that generates a dust spot — indistinguishable from a real one — and composites it onto a random photographic or synthetic background. The synthesizer models the key physical properties of real dust: the irregular blob shape, the per-channel light attenuation in linear space, the blur that softens the edges, and the optional directional shading that some particles exhibit. Every parameter is randomized within carefully calibrated ranges derived from statistical analysis of real dust spots.
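A toy version of such a synthesizer might look like the sketch below. It follows the four ingredients listed above (irregular blob, per-channel attenuation in linear space, blur, optional directional shading), but every numeric range, the blob construction, and all function names are hypothetical placeholders and not DxO's calibrated values or code.

```python
import numpy as np

def gaussian_blur(img, sigma):
    """Separable Gaussian blur with zero padding (fine for a centred spot)."""
    radius = max(1, int(3 * sigma + 0.5))
    x = np.arange(-radius, radius + 1)
    kernel = np.exp(-0.5 * (x / sigma) ** 2)
    kernel /= kernel.sum()
    img = np.apply_along_axis(np.convolve, 1, img, kernel, mode="same")
    return np.apply_along_axis(np.convolve, 0, img, kernel, mode="same")

def irregular_blob(size, radius, rng, irregularity=0.3):
    """Binary blob whose outline radius wobbles with the polar angle."""
    yy, xx = np.mgrid[:size, :size] - (size - 1) / 2.0
    theta = np.arctan2(yy, xx)
    boundary = np.full(theta.shape, radius, dtype=float)
    for k in (2, 3, 4):  # a few low-frequency lobes
        amplitude = irregularity * radius * rng.uniform(0, 1) / k
        boundary += amplitude * np.cos(k * theta + rng.uniform(0, 2 * np.pi))
    return (np.hypot(xx, yy) <= boundary).astype(float)

def synthesize_dust(background, rng):
    """Composite one spot onto a square (>= 64 px) linear-light RGB patch.

    Returns the dusty patch and an (x0, y0, x1, y1) bounding-box label.
    """
    size = background.shape[0]
    radius = rng.uniform(2.0, 8.0)        # hypothetical range, in pixels
    sigma = rng.uniform(0.8, 3.0)         # hypothetical edge blur
    opacity = rng.uniform(0.05, 0.20)     # fraction of light removed
    tint = rng.uniform(0.9, 1.1, size=3)  # slight per-channel difference
    mask = gaussian_blur(irregular_blob(size, radius, rng), sigma)
    if rng.random() < 0.3:                # optional directional shading
        yy, xx = np.mgrid[:size, :size] - (size - 1) / 2.0
        angle = rng.uniform(0, 2 * np.pi)
        ramp = (xx * np.cos(angle) + yy * np.sin(angle)) / radius
        mask = mask * np.clip(1.0 + 0.3 * ramp, 0.0, 2.0)
    # Attenuation is multiplicative because the background is in linear light.
    transmission = np.clip(1.0 - mask[..., None] * (opacity * tint), 0.0, 1.0)
    ys, xs = np.nonzero(mask > 0.1 * mask.max())
    box = (int(xs.min()), int(ys.min()), int(xs.max()) + 1, int(ys.max()) + 1)
    return background * transmission, box

rng = np.random.default_rng(0)
patch = np.full((64, 64, 3), 0.6)  # flat linear-light background for the demo
dusty, box = synthesize_dust(patch, rng)
```

Because the generator knows exactly where it placed the spot, each synthetic sample comes with a bounding-box label at no extra labelling cost.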

This synthetic approach ensures an even distribution of dust characteristics and backgrounds throughout the training set, avoiding the biases that inevitably arise in any manually collected dataset. It guarantees, for example, that the network sees enough very faint spots, enough very small spots, and enough unusual backgrounds, all of which would be underrepresented in a purely real-world collection.
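One simple way to obtain this kind of even coverage is stratified sampling: split each parameter into bins and visit every combination of bins equally often. The sketch below illustrates the idea only; the bins, background categories, and function name are hypothetical and do not describe the actual training pipeline.

```python
import itertools
import random

def balanced_dust_parameters(opacity_bins, radius_bins, backgrounds, seed=0):
    """Yield parameter sets so that every (opacity bin, radius bin, background)
    cell is visited equally often, with a random value drawn inside each bin."""
    rng = random.Random(seed)
    cells = list(itertools.product(opacity_bins, radius_bins, backgrounds))
    while True:
        rng.shuffle(cells)  # new order on every pass, same coverage
        for (o_lo, o_hi), (r_lo, r_hi), background in cells:
            yield {
                "opacity": rng.uniform(o_lo, o_hi),
                "radius": rng.uniform(r_lo, r_hi),
                "background": background,
            }

opacity_bins = [(0.02, 0.05), (0.05, 0.10), (0.10, 0.20)]  # hypothetical ranges
radius_bins = [(1.0, 3.0), (3.0, 6.0), (6.0, 12.0)]         # hypothetical, in pixels
backgrounds = ["sky", "studio", "foliage", "gradient"]      # hypothetical categories

stream = balanced_dust_parameters(opacity_bins, radius_bins, backgrounds)
batch = list(itertools.islice(stream, 72))  # two full passes over the 36 cells
```

With this scheme, faint spots on unusual backgrounds appear exactly as often as prominent spots on skies, regardless of how rare they are in real photographs.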

In total, our dust detection network has seen approximately one million dust spots — a mix of real and synthetic — during its training.