DeepPRIME XD3: Fourth-generation AI denoising and demosaicing

DxO PureRAW 6 introduces DeepPRIME XD3 for Bayer sensors, the latest generation of DxO's deep-learning engine for RAW image processing. A single neural network now performs three tasks simultaneously (denoising, demosaicing, and chromatic aberration correction), delivering images with even finer detail than its predecessor, DeepPRIME XD2s.

The technology rests on three pillars: a new multi-task formulation that adds chromatic aberration correction to the network's responsibilities, an optimized convolutional architecture discovered through extensive research, and a significantly improved training pipeline that closes the gap between synthetic training data and real-world RAW images.

Key benefits

- Better image quality. Cleaner color reconstruction, finer detail, and fewer artifacts, especially on high-frequency textures and edges, and particularly on recent sensors without an optical anti-aliasing filter.

- Same processing speed. Despite a substantially more capable network, DeepPRIME XD3 runs as fast as DeepPRIME XD2s on consumer hardware.

- Broad compatibility. DeepPRIME XD3 unites all our recent advances in RAW image processing, and it now supports both Bayer and X-Trans sensors.

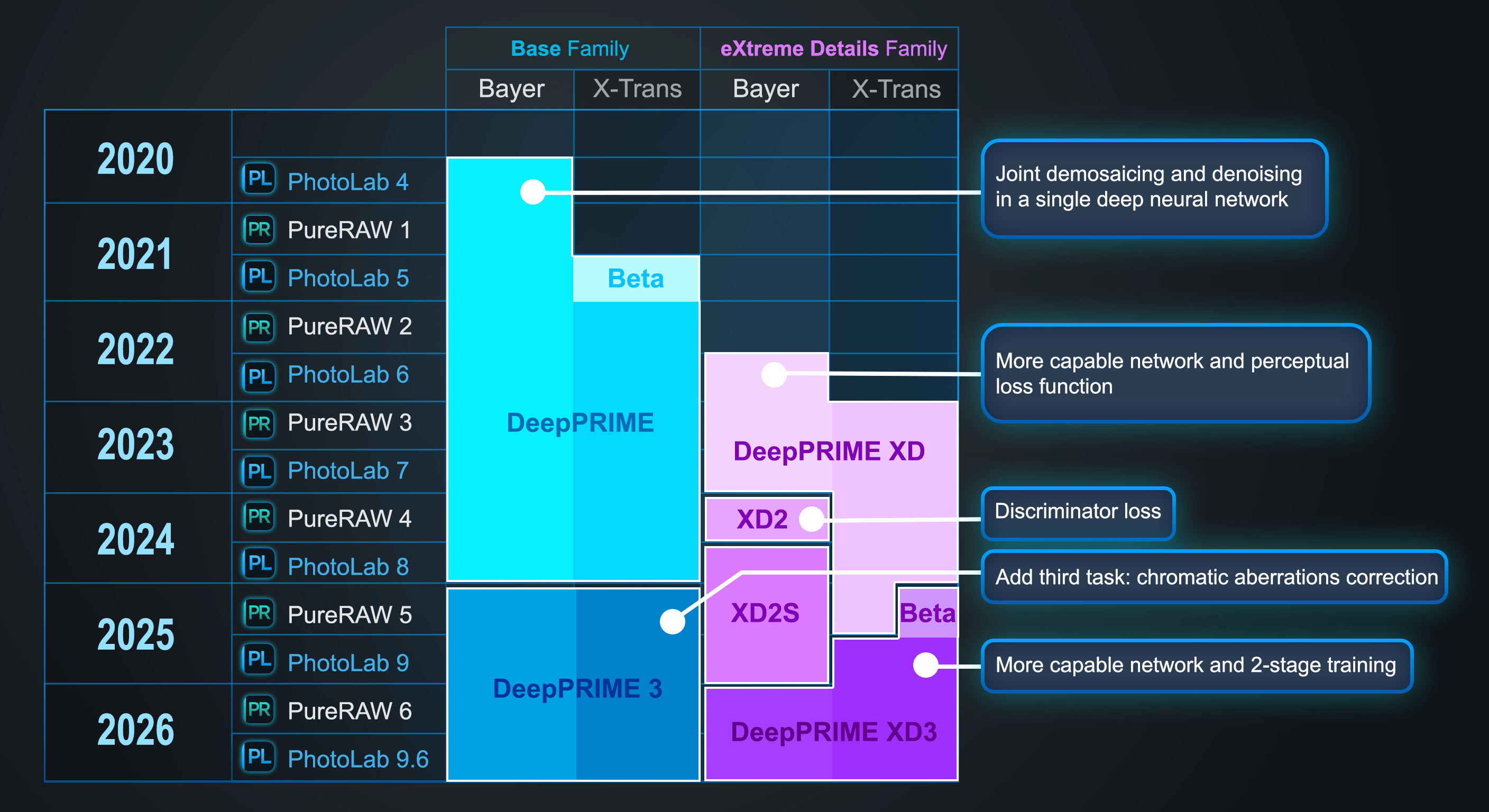

A six-year journey

RAW conversion, the process of turning a camera sensor's mosaic of noisy single-color samples into a full-color photograph, has been at the heart of DxO's expertise for over two decades. In 2020, DxO introduced DeepPRIME, the first commercially available neural network to perform denoising and demosaicing jointly in a single pass.

Since then, we have worked relentlessly to push quality further. Deep learning and this holistic approach were also what allowed us to finally support X-Trans sensors, a variant found in part of Fujifilm's camera lineup. These sensors had never been supported by our classical denoisers. In 2022, we introduced the "XD" (eXtreme Detail) family — a second tier of DeepPRIME engines that reach for the highest possible image quality, at the cost of significantly heavier computation that demands a powerful GPU — or a good measure of patience.

2020: DxO PhotoLab 4

DeepPRIME. Joint denoising and demosaicing in a single deep neural network (Bayer only).

2022: DxO PureRAW 2

DeepPRIME extends to X-Trans sensors.

2022: DxO PhotoLab 6

DeepPRIME XD ("eXtreme Detail"). More capable architecture and perceptual loss function, encouraging finer detail (Bayer only).

2023: DxO PureRAW 3

DeepPRIME XD extends to X-Trans sensors.

2024: DxO PureRAW 4

DeepPRIME XD2. Adversarial discriminator loss for a more natural rendering (Bayer only).

2024: DxO PhotoLab 8

DeepPRIME XD2s. Improved noise calibration for selected camera bodies.

2025: DxO PureRAW 5

DeepPRIME 3. Three joint tasks: denoising, demosaicing, and chromatic aberration correction (Bayer and X-Trans).

2025: DxO PhotoLab 9

DeepPRIME XD3. More capable architecture and two-phase training (X-Trans only).

2026: DxO PureRAW 6

DeepPRIME XD3 extends to Bayer sensors.

Focusing on X-Trans first during the development of DeepPRIME XD3 was a natural choice: the X-Trans version of DeepPRIME XD was older and easier to surpass than DeepPRIME XD2s, which Bayer users already enjoyed. But it left Bayer users in a somewhat complex situation. On most images, DeepPRIME XD2s delivered the highest quality, yet on certain low-ISO images affected by chromatic aberrations, DeepPRIME 3 could actually yield better results. The release of DeepPRIME XD3 for Bayer sensors finally brings us back to a simplicity we had not enjoyed since 2023: whatever camera you use, you can choose between two RAW conversion networks, one that strikes a balance between speed and image quality and one that reaches for the utmost in image quality.

The RAW image restoration challenge

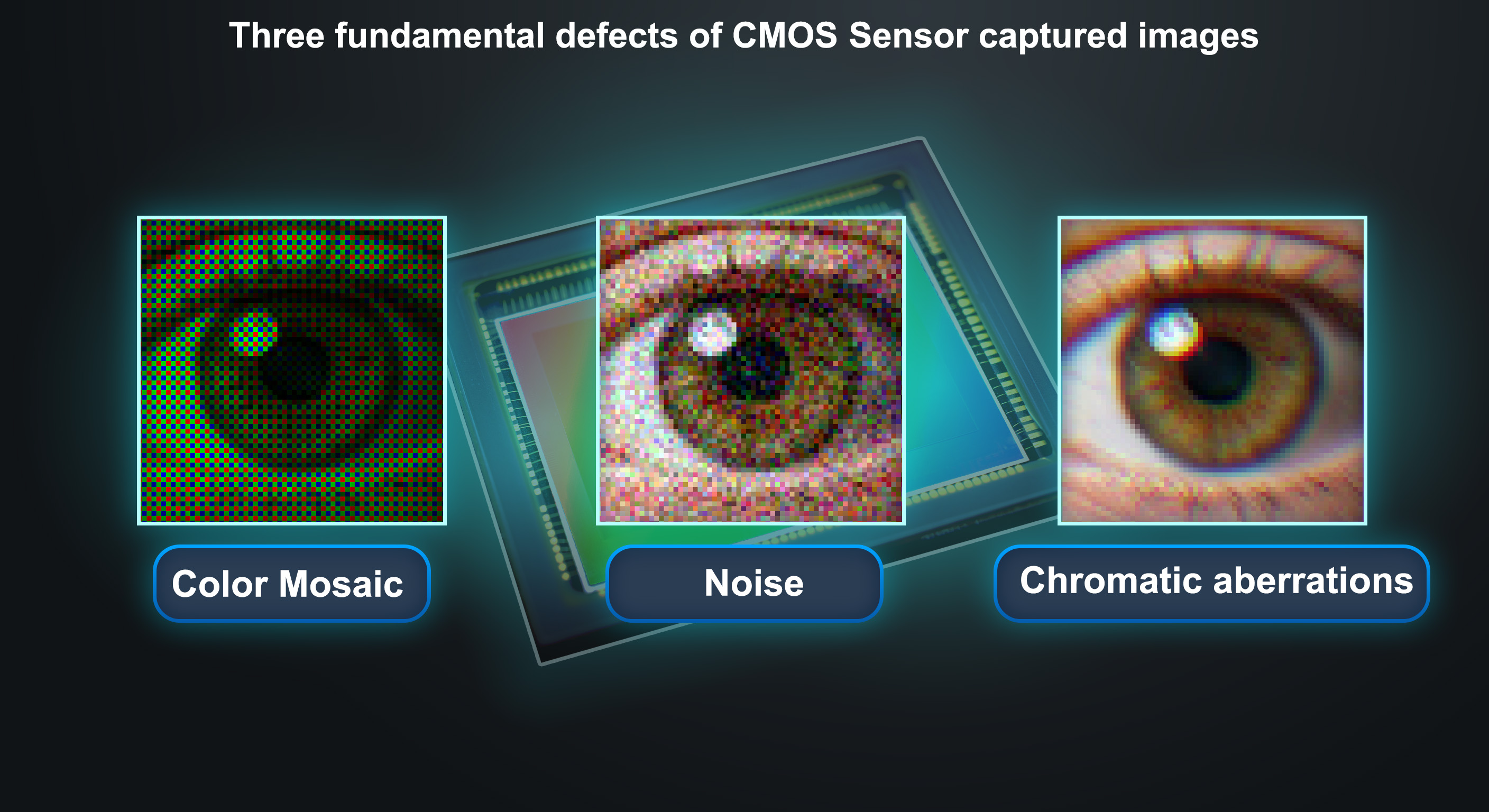

Every digital image captured by a CMOS sensor contains three fundamental defects, all introduced before any software touches the pixels:

Color mosaic. The sensor does not capture full color at each pixel. Instead, a grid of tiny color filters lets each photosite record only one of three colors (red, green, or blue). Reconstructing the two missing color values at every pixel is the task of demosaicing. Two filter patterns are common in digital photography: Bayer, used by approximately 95% of all digital cameras, and X-Trans, found in the remaining 5%.

Sensor noise. Each photosite collects a random number of photons. The resulting shot noise is an inescapable property of light itself, and electronic read noise compounds it further. At high ISO sensitivities, noise can obscure fine detail entirely.

Chromatic aberrations. Most lenses don't focus all wavelengths of light to exactly the same point. The result is small lateral shifts between the red, green, and blue channels, visible as colored fringes along high-contrast edges.

Traditional RAW processing treats these three problems independently: a demosaicing algorithm interpolates the missing colors, a separate denoiser suppresses noise, and a third module corrects chromatic aberrations. Each module works in isolation, unaware of the others' decisions, and each can introduce its own artifacts that complicate the next step. DxO's approach, starting with DeepPRIME in 2020, has always been to solve multiple problems jointly in a single neural network. With DeepPRIME XD3, that principle now extends to all three defects.

Three defects, one network

The case for solving denoising, demosaicing, and chromatic aberration correction jointly rests on the fundamental interdependence of the three tasks.

Consider what happens when these tasks are separated. Denoising a RAW image requires some understanding of how the mosaic pattern relates to the underlying scene — essentially, an implicit demosaicing on the fly. Conversely, demosaicing a noisy image requires the ability to see structure through the noise — essentially, an implicit denoising — because distinguishing a real edge from a noise fluctuation is critical for correct color interpolation. And demosaicing an image affected by chromatic aberrations is very nearly the same problem as correcting those aberrations: if the red, green, and blue channels are laterally shifted relative to one another, then reconstructing the correct color at each pixel requires imagining what the image would look like if the channels were aligned.

Splitting these three tasks across three separate networks — even networks trained to cope with the artifacts produced by the previous stage — would require more weights and more computation globally, because each network would need to internally replicate part of the intelligence of the others. The result would be longer processing times for equivalent quality, or lower quality for equivalent speed.

A single network, by contrast, can share internal representations across all three tasks. The features it learns to detect edges for demosaicing also help it distinguish signal from noise and identify lateral chromatic shifts.

Synthetic training data

A neural network is only as good as the data it learns from. For DeepPRIME XD3, the quality and realism of the training data are every bit as important as the architecture of the network itself.

The training data problem

When research on DeepPRIME began at DxO in 2018, a fundamental question was: how do we obtain the training examples that a supervised neural network needs — pairs of degraded input images and their corresponding perfect originals?

All options were on the table. Taking pairs of real photographs — a clean, low-ISO shot alongside a noisy, high-ISO shot of the same scene — seemed natural, but proved impractical: the two exposures never align perfectly, moving subjects cause inconsistencies, and the approach would have to be repeated for every camera body and every ISO sensitivity DxO supports. The noise-to-noise approach, which substitutes burst sequences for clean references, suffers from similar scaling limitations. And classical labeling — the backbone of most supervised learning — is simply impossible here: no human can look at a noisy mosaic of single-channel pixel values and propose the correct full-color, noise-free output for billions of pixels.

That left synthetic data generation: starting from pristine, high-quality photographs and simulating the defects that a real camera sensor would introduce. Each training example is thus a pair: a synthetically degraded image, and the original pristine version serving as ground truth. On paper, this is the most scalable solution by far. DxO supports over 600 camera bodies across roughly 20 ISO settings each, creating over 12,000 possible configurations. And this figure accounts only for noise: chromatic aberrations depend on the lens, the aperture, the zoom setting, and the focusing distance. If we wanted to capture real image pairs for every camera–ISO–lens combination, the number of configurations would explode into the millions. Synthetic generation can cover all of them from the same pool of ground-truth images.

The distribution gap

The challenge with synthetic data is a phenomenon known as the distribution gap: the statistical difference between the simulated training images and the real RAW files the network will encounter in production.

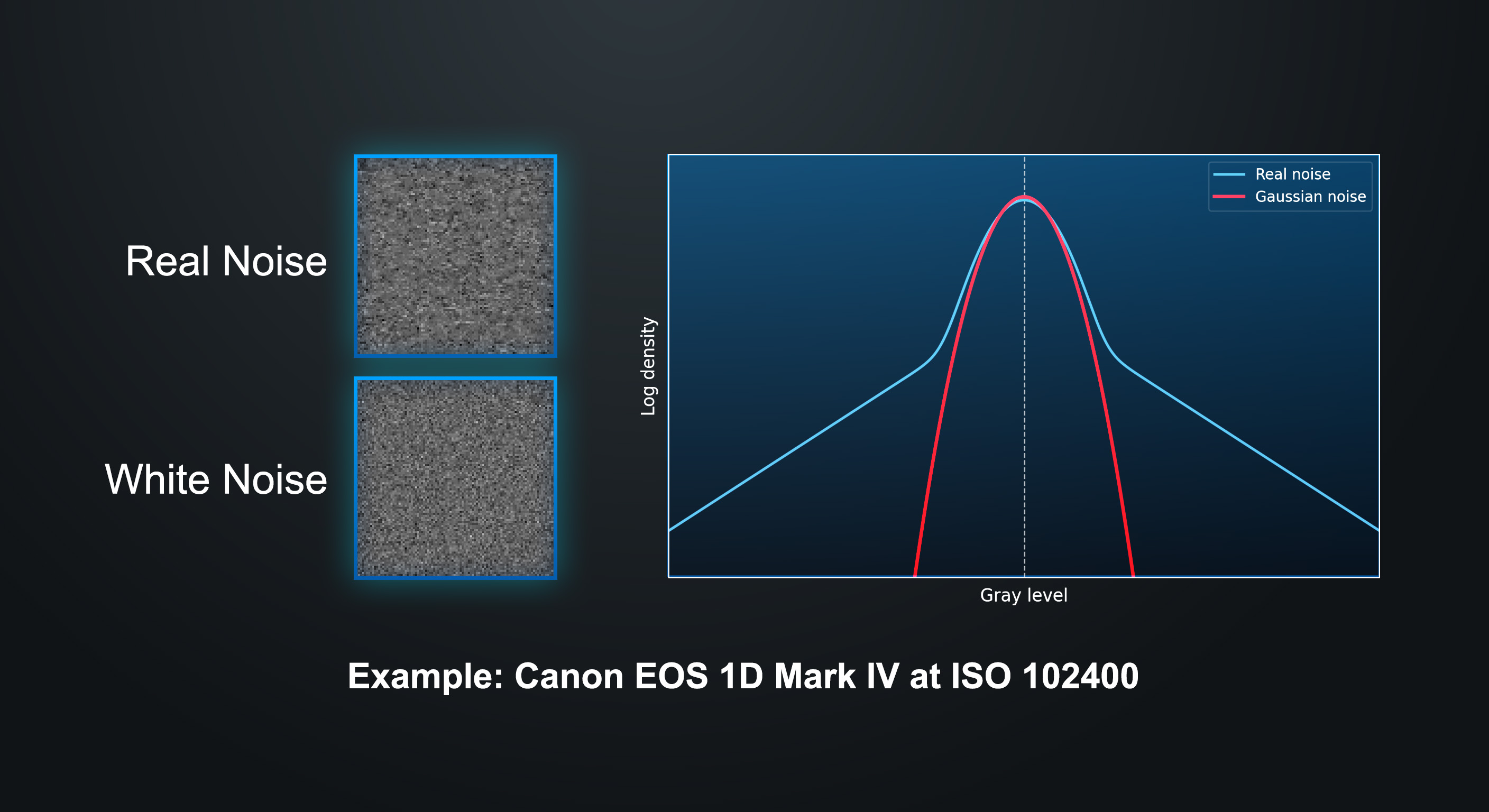

A naïve simulation — shifting color channels slightly to mimic chromatic aberrations, removing two color values out of three to simulate the Bayer mosaic, then adding white Gaussian noise — is enough to generate the above illustrations for this white paper. It is not enough to train a neural network. A network trained on such idealized data would perform well on synthetic images drawn from the same simulation, including images it has never seen during its training, but it would fail on real RAW files from real cameras.
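To make the naïve simulation concrete, its three steps can be sketched in a few lines of Python. This is an illustrative sketch only, not the code used for the illustrations or for training: the function name `naive_degrade`, the RGGB layout, the uniform one-pixel horizontal shift, and the noise level `sigma` are all assumptions chosen for brevity.

```python
import random


def naive_degrade(rgb, shift=1, sigma=0.05, seed=0):
    """Idealized degradation of a clean image (hypothetical helper).

    rgb: H x W nested list of (r, g, b) floats in [0, 1].
    Returns an H x W mosaic holding one noisy value per photosite.
    """
    rng = random.Random(seed)
    h, w = len(rgb), len(rgb[0])
    mosaic = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # 1. Crude chromatic aberration: sample the red plane `shift`
            #    pixels to the left and the blue plane `shift` pixels to
            #    the right (indices clamped at the image borders).
            r = rgb[y][min(w - 1, max(0, x - shift))][0]
            g = rgb[y][x][1]
            b = rgb[y][min(w - 1, max(0, x + shift))][2]
            # 2. Bayer mosaic (RGGB assumed): keep one channel per photosite.
            if y % 2 == 0 and x % 2 == 0:
                value = r
            elif y % 2 == 1 and x % 2 == 1:
                value = b
            else:
                value = g
            # 3. Additive white Gaussian noise, independent of the signal.
            mosaic[y][x] = value + rng.gauss(0.0, sigma)
    return mosaic
```

Pairing the returned mosaic with the untouched `rgb` input gives one (degraded, ground truth) example of exactly the idealized kind that is insufficient for training.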

Real RAW images differ from a naïve simulation in countless ways:

Noise is not purely white Gaussian. Photon shot noise is indeed white and signal-dependent, guaranteed by the physics of light. But real sensor data is a mixture of photonic and electronic noise. Electronic noise — read noise, dark current, banding — can exhibit spatial correlations, non-Gaussian tails, and fixed patterns that vary from one sensor design to the next.

Chromatic aberrations vary across the field. Lateral color shifts are not uniform — they change in magnitude and direction from the center of the image to the corners, following the optical properties of each specific lens.

"RAW" files are not truly RAW. Before the data is written to the memory card, the camera applies a series of in-camera processing steps that alter the signal: black level correction, fixed-pattern noise subtraction, static defective pixel correction, focus pixel interpolation. Some manufacturers go further and apply lossy compression or even noise reduction to what they label as RAW data.

Sensor behavior changes with usage. Noise characteristics can shift depending on sensor temperature, shutter mode (mechanical vs. electronic), and other operating conditions.

All of this varies across manufacturers and across camera generations. Manufacturers do not document their internal processing, so we must infer what they do from careful observation.
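Of the differences listed above, the signal dependence of noise is the easiest to illustrate. It is commonly approximated with a Poisson-Gaussian model in which the variance grows linearly with the signal. The sketch below shows that textbook model under stated assumptions: the parameter names `gain` and `read_std` and their values are hypothetical, shot noise is approximated as Gaussian, and none of the spatial correlations, non-Gaussian tails, or fixed patterns mentioned above are modeled.

```python
import math
import random


def noise_std(signal, gain=0.01, read_std=0.002):
    """Noise standard deviation at a linear signal level in [0, 1].

    Shot-noise variance is proportional to the signal; read-noise
    variance is constant. Both parameters are hypothetical.
    """
    return math.sqrt(gain * max(signal, 0.0) + read_std ** 2)


def add_sensor_noise(signal, rng, gain=0.01, read_std=0.002):
    """Add one sample of signal-dependent Gaussian noise to a value."""
    return signal + rng.gauss(0.0, noise_std(signal, gain, read_std))


rng = random.Random(0)
for level in (0.01, 0.1, 0.5):
    std = noise_std(level)
    print(f"signal={level:.2f}  std={std:.4f}  SNR={level / std:.1f}")
```

Brighter pixels carry more noise in absolute terms but a better signal-to-noise ratio, which a constant-variance simulation cannot reproduce.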

Closing the gap

Since 2018, DxO has leveraged everything at its disposal to minimize the distribution gap: two decades of expertise in image signal processing and, crucially, a proprietary calibration database that has no equivalent in the industry. For every supported camera body, at every ISO setting, DxO's laboratory has captured and analyzed calibration images — both photographic content and dark frames — to characterize not just the standard deviation of the noise, but its full statistical profile: its distribution, any spatial correlations introduced by in-camera processing, and how these properties change across the sensor and across operating conditions. This database, originally built to feed DxO's classical denoising algorithms, turned out to be an invaluable foundation for training neural networks.

Occasionally, however, a camera reveals gaps that the existing simulation does not cover. A recent example illustrates the challenge: Fujifilm's X-Trans sensors of the 4th and 5th generations, where something changed relative to the first three generations. Despite extensive efforts, our DeepPRIME XD2 training pipeline never managed to produce satisfactory results for these sensors, which is why DeepPRIME XD2 and XD2s were released as Bayer-only.

For DeepPRIME XD3, properly supporting these sensors was a top priority. Over months of investigation, the team dissected how the newer X-Trans sensors differed from their predecessors and systematically adjusted the training data synthesis until the distribution gap became small enough for the network to generalize well to real images from these cameras.

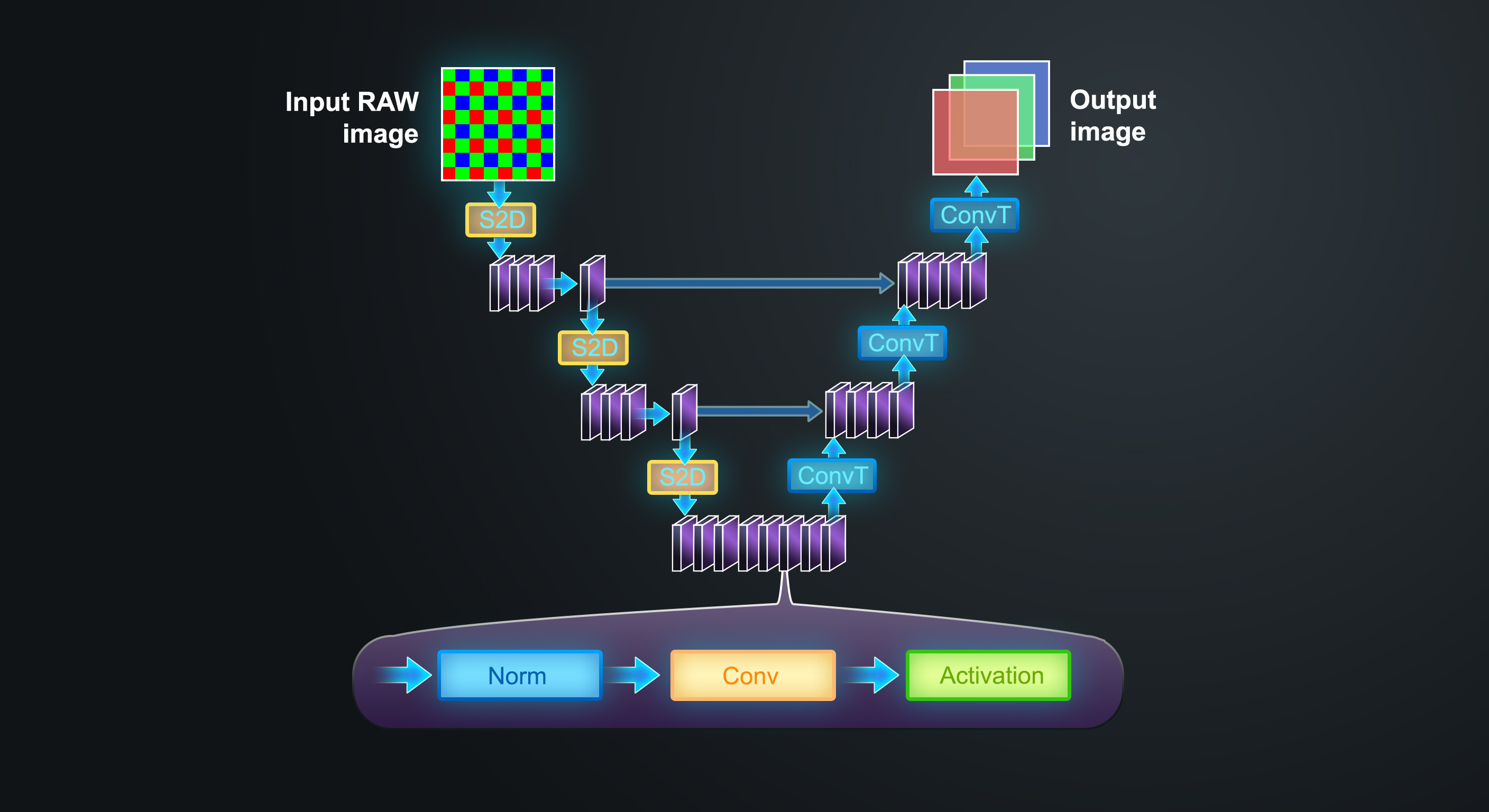

Finding the best architecture

Adding a third task and demanding better demosaicing quality required a more capable network. The team began with a broad exploration. Transformer architectures, which dominate many fields of deep learning today, were tested alongside multiple convolutional neural network (CNN) designs. For this particular task — recovering fine, local image detail from noisy and incomplete data — CNNs proved more effective. Their inherent local bias, which focuses on small spatial neighborhoods, naturally encourages the smoothing of noise without hallucinating structure that is not there. Transformers, which model long-range dependencies, tended to let noise through rather than suppress it. For a denoiser, the CNN's bias toward local regularity is a feature, not a limitation.

An early prototype of DeepPRIME XD3 achieved the desired quality, but ran three times slower than DeepPRIME XD2s — too slow for a production tool used on thousands of images. The challenge, then, was to find an architecture that could be just as intelligent while fitting within the same computational budget. The team explored different convolutional block designs, separable convolutions in place of the full 3D convolutions used in earlier generations, different activation functions, and varying amounts of computation allocated to each scale of the U-Net.
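The appeal of separable convolutions is easy to quantify. The sketch below compares weight counts for a standard convolution and a depthwise-separable one. It assumes that "separable" means depthwise-separable, which the text does not specify, and the layer size (3x3 kernel, 64 input and output channels) is hypothetical; biases are ignored.

```python
def full_conv_weights(kernel, c_in, c_out):
    """Weights in a standard convolution: every output channel sees a
    kernel x kernel window across all input channels."""
    return kernel * kernel * c_in * c_out


def separable_conv_weights(kernel, c_in, c_out):
    """Weights in a depthwise-separable convolution: one kernel x kernel
    filter per input channel, then a 1x1 convolution to mix channels."""
    return kernel * kernel * c_in + c_in * c_out


# Hypothetical layer size, for illustration only.
full = full_conv_weights(3, 64, 64)            # 36864
separable = separable_conv_weights(3, 64, 64)  # 4672
print(f"full: {full}, separable: {separable}, ratio: {full / separable:.1f}x")
```

The same ratio applies to multiply-accumulate operations per output pixel, so a substitution of this kind frees up computation that can be reinvested in additional channels or blocks.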

Each candidate architecture was trained for approximately three weeks on an NVIDIA H100 GPU. Around 50 configurations were evaluated in total, amounting to roughly three years of cumulative H100 GPU time dedicated solely to architecture exploration.
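The order of magnitude is easy to check. The short calculation below takes the quoted figures at face value (about 50 configurations, about three weeks each) and assumes one GPU per training run.

```python
configs = 50          # approximate number of architectures evaluated
weeks_per_run = 3     # approximate training time per architecture

total_weeks = configs * weeks_per_run    # 150 GPU-weeks
total_years = total_weeks * 7 / 365.25   # a little under 2.9 GPU-years
print(f"{total_weeks} GPU-weeks, about {total_years:.1f} GPU-years")
```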

This entire process was carried out twice: first for X-Trans, then for Bayer. This is the principal reason why the Bayer version arrives only now in DxO PureRAW 6, while the X-Trans version was already released six months earlier in DxO PhotoLab 9.

The outcome is a network with significantly more parameters than DeepPRIME XD2s, arranged in a way that keeps inference time essentially the same on consumer hardware. More weights, more intelligence, but no significant penalty in processing speed.

Renoising, rethought

Almost twenty years ago, DxO's researchers made an observation that still holds today: it is very difficult to make a denoiser remove only part of the noise. Denoisers — from the earliest wavelet and non-local means filters to modern neural networks — generally perform best when asked to remove all noise. Attempting partial removal tends to produce artifacts. The better the denoiser, the more detail it preserves in the process, but even the best denoisers inevitably erase some fine structure along with the noise.

To avoid the "plastic" look that results from fully denoised images, our researchers devised a simple but effective technique: let the denoiser do its job completely, then add a small fraction of the removed noise back to the image. Reintroducing part of the original noise, rather than synthetic white noise, has a crucial advantage — it also reintroduces part of the fine detail that was lost in the process. The first product to feature this technique was DxO OpticsPro 5, released in 2008. Even though DeepPRIME XD3 is vastly more capable than the denoising and demosaicing algorithms of that era, the principle remains as valid as ever.
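The principle fits in one line of arithmetic: the output is the denoised image plus a fraction of the residual that the denoiser removed. The sketch below is a minimal illustration on flat lists of pixel values; the function name `renoise` and the default `fraction` are hypothetical, and the production implementation is not described here.

```python
def renoise(noisy, denoised, fraction=0.1):
    """Add back a fraction of the removed residual (noisy - denoised).

    Because the residual contains both noise and any fine detail the
    denoiser erased, part of that detail returns with it.
    """
    return [d + fraction * (n - d) for n, d in zip(noisy, denoised)]


noisy = [0.52, 0.47, 0.55, 0.41]
denoised = [0.50, 0.50, 0.50, 0.45]
print(renoise(noisy, denoised, fraction=0.2))
```

Setting `fraction` to 0 returns the fully denoised image and setting it to 1 returns the original noisy input; useful values lie close to 0.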

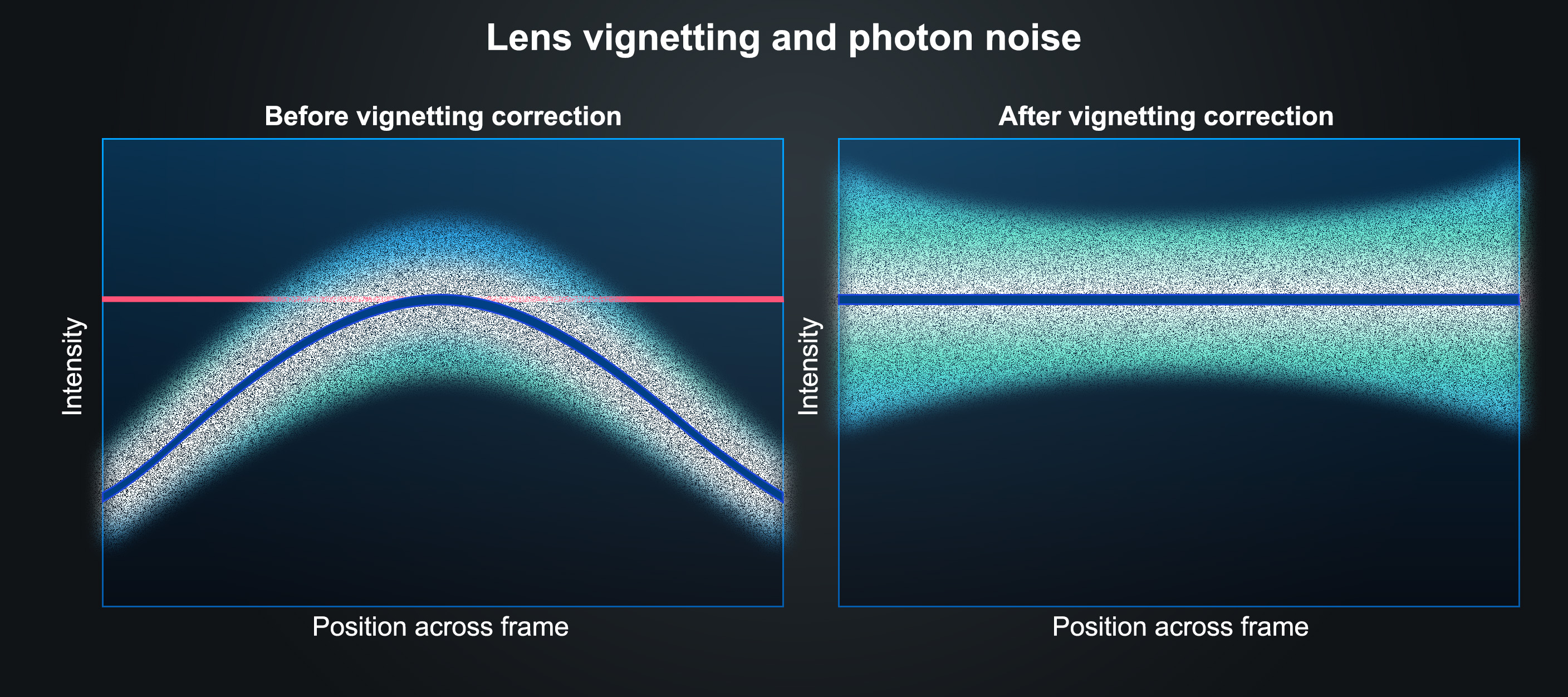

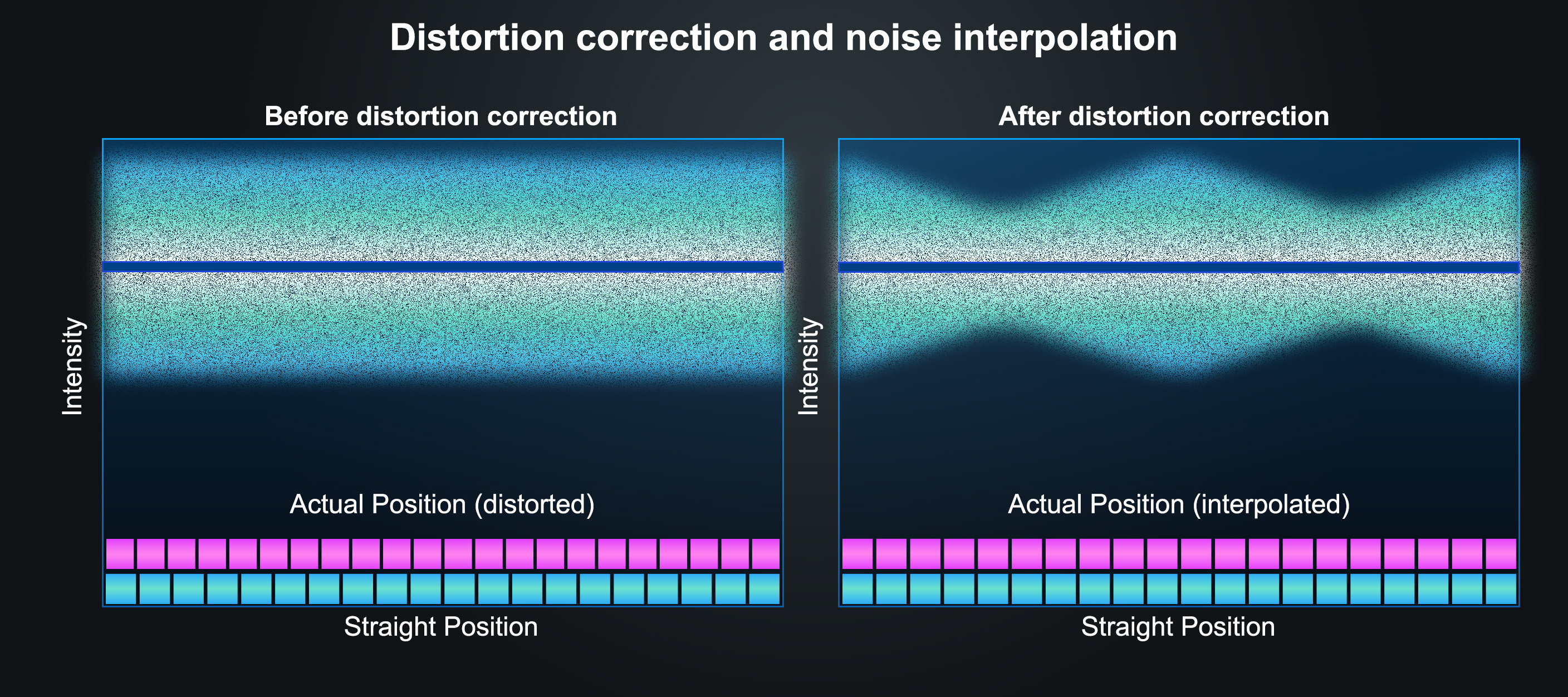

For DxO PureRAW 6, we reworked how this noise reintroduction interacts with our lens corrections, specifically with vignetting and distortion correction. Both corrections are now applied before adding the residual noise back to the image, which allows us to treat the main signal and the noise component differently.

Vignetting. The noise level in RAW images depends on the signal level in a nonlinear way. With a lens that exhibits strong vignetting, the signal-to-noise ratio decreases significantly in the corners. When we amplify the corners to produce a uniformly bright image, we also amplify the noise, leaving it visibly stronger than in the center. The solution is to use the noise model — the known relationship between signal level and noise level — to derive a correction factor that produces homogeneous noise across the frame, and to apply this factor to the noise before adding it back.
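One way such a correction factor could be derived is sketched below. It is an assumption for illustration, not the formula used in DxO PureRAW 6: it reuses a Poisson-Gaussian noise model with hypothetical parameters `gain` and `read_std`, and defines "homogeneous" as giving a vignetted pixel the same noise level an unvignetted pixel of equal brightness would have.

```python
import math


def noise_std(signal, gain=0.01, read_std=0.002):
    """Hypothetical noise model: std as a function of linear signal."""
    return math.sqrt(gain * max(signal, 0.0) + read_std ** 2)


def noise_gain(corrected_signal, attenuation, gain=0.01, read_std=0.002):
    """Gain for the noise component of a vignetted pixel.

    attenuation: fraction of light reaching the pixel (0 < a <= 1).
    The signal itself is multiplied by 1 / attenuation; the noise is
    instead scaled so that its std matches an unvignetted pixel of the
    same corrected brightness.
    """
    raw_signal = corrected_signal * attenuation
    return (noise_std(corrected_signal, gain, read_std)
            / noise_std(raw_signal, gain, read_std))


signal_gain = 1 / 0.25
print(f"signal gain: {signal_gain:.1f}, noise gain: {noise_gain(0.5, 0.25):.2f}")
```

With these hypothetical parameters, a corner that receives 25% of the light gets a signal gain of 4 but a noise gain of only about 2, because shot noise scales with the square root of the signal.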

Distortion. Distortion correction requires geometric interpolation of the pixel grid. When applied to white noise, interpolation introduces two unwanted effects: it creates spurious structure in the noise, and it causes periodically varying noise levels. At positions where the interpolated coordinate coincides with a real pixel, the noise is preserved as-is, while at positions that fall between pixels, the noise is smoothed and its level drops. In DxO PureRAW 6, we address this by applying a specialized interpolation algorithm to the noise component separately, ensuring that its level remains uniform after distortion correction.
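The periodic drop in noise level follows directly from the interpolation weights. For linear interpolation between two independent noise samples with weights (1 - t) and t, the standard deviation is multiplied by sqrt((1 - t)^2 + t^2). The sketch below illustrates this effect in one dimension, along with the simplest possible compensation; it is an assumption for illustration, since the specialized algorithm itself is not described here.

```python
import math


def interp_noise_std_factor(t):
    """Std factor of linearly interpolated white noise at fractional
    offset t in [0, 1] between two independent samples."""
    return math.sqrt((1.0 - t) ** 2 + t ** 2)


def interp_noise_level_preserving(n0, n1, t):
    """Linear interpolation of two noise samples, rescaled so that the
    result keeps the std of the inputs at every offset t."""
    return ((1.0 - t) * n0 + t * n1) / interp_noise_std_factor(t)


for t in (0.0, 0.25, 0.5):
    print(f"t={t:.2f}  std factor={interp_noise_std_factor(t):.3f}")
```

Halfway between two pixels the noise level falls to about 71% of its original value, while on the pixel grid it is untouched. The rescaling above equalizes the level only; it does not remove the spatial correlation that interpolation introduces.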

Both effects are most visible at high ISO settings, where the residual noise — even though it is only a fraction of the original — is clearly perceptible.

This improved renoising pipeline applies to both DeepPRIME 3 and DeepPRIME XD3. It is a good example of how much we care about the details: our ambition is not "only" to build the world's best denoiser, but the world's best RAW conversion engine.

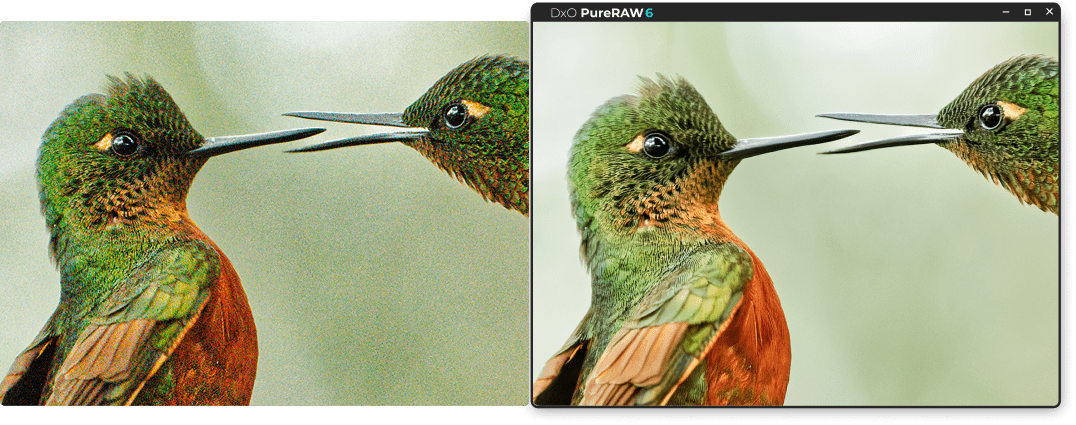

The results

The practical effect of all these advances depends on image content and shooting parameters. Compared to DeepPRIME XD, which DeepPRIME XD3 replaces for X-Trans sensors, the new engine generally yields cleaner, more natural results. Compared to DeepPRIME 3, it almost always produces images that are both cleaner and more detailed, at all ISO sensitivities. The difference with DeepPRIME XD2s is more subtle: DeepPRIME XD3 shows its advantage most clearly on images with fine textures, sharp lenses, sensors without an optical anti-aliasing filter, and lenses exhibiting chromatic aberration. Improvements in demosaicing and chromatic aberration correction are most visible at low ISO, while improved detail preservation is most apparent at intermediate to high ISO settings.